Click on below given link to download the document:

2: Important_Frequently_Asked_PL/SQL_Questions_With_Answers-Set-2(Part-A)

Password For Opening Document : Airydba@159

Password For Opening Document : Airydba@159

[oracle@paw-prim ~]$ sqlplus / as sysdba

SQL*Plus: Release 12.2.0.1.0 Production on Mon Mar 6 00:33:16 2017

Copyright (c) 1982, 2017, Oracle. All rights reserved.

Connected to an idle instance.

SQL> startup mount

ORACLE instance started. Total System Global Area 1610612736 bytes Fixed Size 2924928 bytes Variable Size 1040191104 bytes Database Buffers 553648128 bytes Redo Buffers 13848576 bytes Database mounted. Database open.

SQL> select dest_id,status,error from v$archive_dest where status<>’INACTIVE’;

DEST_ID STATUS ERROR ----------------------------------------------------------------- 1 VALID 2 VALID

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ----------------------------------------------------------------------------------- 1 VALID OPEN LOCAL IDLE

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=2;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ------------------------- --------------------------------------------------------- 2 VALID MOUNTED-STANDBY PHYSICAL MANAGED

[oracle@paw-stand1 ~]$ sqlplus / as sysdba

SQL*Plus: Release 12.2.0.1.0 Production on Mon Mar 6 00:33:16 2017

Copyright (c) 1982, 2017, Oracle. All rights reserved.

Connected to an idle instance.

SQL> startup mount

ORACLE instance started. Total System Global Area 1610612736 bytes Fixed Size 2924928 bytes Variable Size 1040191104 bytes Database Buffers 553648128 bytes Redo Buffers 13848576 bytes Database mounted.

SQL> select open_mode from v$database;

OPEN_MODE -------------------- MOUNTED

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0

SQL> select member from v$logfile;

MEMBER --------------------------------------------------------------------- /u01/app/oracle/oradata/stand1/redo03.log /u01/app/oracle/oradata/stand1/redo02.log /u01/app/oracle/oradata/stand1/redo01.log

SQL> select name,open_mode,database_role,protection_mode from v$database;

NAME OPEN_MODE DATABASE_ROLE PROTECTION_MODE ------ -------------------- ---------------- -------------------- PROD1 READ ONLY PHYSICAL STANDBY MAXIMUM PERFORMANCE

SQL> show parameter db_unique_name

NAME TYPE VALUE -------------------- --------- ---------- ------------------------------ db_unique_name string stb1

SQL> select process,status,block#,blocks from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS --------- ------------ ---------- ---------- ARCH CONNECTED 0 0 ARCH CONNECTED 0 0 ARCH CONNECTED 0 0 ARCH CONNECTED 0 0 RFS IDLE 0 0 RFS IDLE 2805 1 RFS IDLE 0 0 RFS IDLE 0 0 8 rows selected.

SQL> set line 500

SQL> col value for a25

SQL> select name,value,datum_time,time_computed from v$dataguard_stats; NAME VALUE DATUM_TIME TIME_COMPUTED --------------- ----------------------- -------------------- -------------------- transport lag +00 00:41:00 03/06/2017 01:46:25 03/06/2017 01:46:39 apply lag 03/06/2017 01:46:39 apply finish time 03/06/2017 01:46:39 estimated startup time 12 03/06/2017 01:46:39

SQL> select dest_id,status,error from v$archive_dest where status<>’INACTIVE’;

DEST_ID STATUS ERROR ----------- --------- --------------------------------------------------------- 1 VALID 32 VALID

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ---------- --------- --------------- ---------------- ----------------------- ----- 1 VALID MOUNTED-STANDBY LOCAL IDLE

SQL> alter database recover managed standby database using archived logfile disconnect;

Database altered.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ---------- --------- --------------- -------- ------------------- --------------- 1 VALID MOUNTED-STANDBY LOCAL MANAGED

SQL> select dest_id,status,error from v$archive_dest where status<>’INACTIVE’;

DEST_ID STATUS ERROR ------------- ----------- ------------------------------------------------------ 1 VALID 32 VALID

SQL> select name,value,datum_time,time_computed from v$dataguard_stats;

NAME VALUE DATUM_TIME TIME_COMPUTED -------------------------------- ------------------------ ------------------------ transport lag +00 00:53:00 03/06/2017 01:58:26 03/06/2017 01:59:22 apply lag +00 00:53:00 03/06/2017 01:58:26 03/06/2017 01:59:22 apply finish time +00 00:00:00.001 03/06/2017 01:59:22 estimated startup time 12 03/06/2017 01:59:22

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 9482 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> alter database recover managed standby database cancel;

Database altered.

SQL> alter database recover managed standby database disconnect;

Database altered.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ---------- --------- --------------- ---------------- ----------------------- ----- 1 VALID MOUNTED-STANDBY LOCAL MANAGED

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 10531 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 10535 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- -------- ------------ ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 10538 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 10539 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ---------- --------- --------------- ---------------- ----------------------- ----- 1 VALID MOUNTED-STANDBY LOCAL MANAGED

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 10918 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 10937 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> select dest_id,status,error from v$archive_dest where status<>’INACTIVE’;

DEST_ID STATUS ERROR ------------ ------------ -------------------------------------------------------- 1 VALID 32 VALID

SQL> select name,value,datum_time,time_computed from v$dataguard_stats;

NAME VALUE DATUM_TIME TIME_COMPUTED ------------------------ --------------------------------- ------------------------ transport lag +00 01:03:00 03/06/2017 02:08:25 03/06/2017 02:09:26 apply lag +00 01:03:00 03/06/2017 02:08:25 03/06/2017 02:09:26 apply finish time +00 00:00:00.001 03/06/2017 02:09:26 estimated startup time 12 03/06/2017 02:09:26

SQL> alter database recover managed standby database cancel;

Database altered.

SQL> alter database recover managed standby database using current logfile disconnect;

Database altered.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR -------------- ---------- ---------------- --------- ------------------ ----------- 1 VALID MOUNTED-STANDBY LOCAL MANAGED

SQL> select * from v$standby_log;

no rows selected

SQL> alter database recover managed standby database cancel;

Database altered.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR -------------- --------- --------------- ------------ --------------- ----------- 1 VALID OPEN LOCAL IDLE

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=2;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ------------- ---------- ----------------- ---------- --------------- ------------- 2 VALID MOUNTED-STANDBY PHYSICAL MANAGED

SQL> select GROUP#,THREAD#,members,BYTES/1024/1024 from v$log;

GROUP# THREAD# MEMBERS BYTES/1024/1024 ----------- --------- -------- ---------------- 1 1 1 50 2 1 1 50 3 1 1 50

SQL> select open_mode from v$database;

OPEN_MODE -------------------- MOUNTED

SQL> alter database add standby logfile thread 1 ‘/u01/app/oracle/oradata/stb1/stbredo01.log’ size 55M;

Database altered.

SQL> alter database add standby logfile thread 1 ‘/u01/app/oracle/oradata/stb1/stbredo02.log’ size 55M;

Database altered.

SQL> alter database add standby logfile thread 1 ‘/u01/app/oracle/oradata/stb1/stbredo03.log’ size 55M;

Database altered.

SQL> alter database add standby logfile thread 1 ‘/u01/app/oracle/oradata/stb1/stbredo04.log’ size 55M;

Database altered.

SQL> select GROUP#,THREAD#,SEQUENCE#,status from v$standby_log;

GROUP# THREAD# SEQUENCE# STATUS ---------- ---------- ---------- ---------- 4 0 0 UNASSIGNED 5 0 0 UNASSIGNED 6 0 0 UNASSIGNED 7 0 0 UNASSIGNED

SQL> col member for a50

SQL> select GROUP#,member,type,status from v$logfile;

GROUP# MEMBER TYPE STATUS ---------- ------------------------------------------- -------- -------- 3 /u01/app/oracle/oradata/stand1/redo03.log ONLINE 2 /u01/app/oracle/oradata/stand1/redo02.log ONLINE 1 /u01/app/oracle/oradata/stand1/redo01.log ONLINE 4 /u01/app/oracle/oradata/stb1/stbredo01.log STANDBY 5 /u01/app/oracle/oradata/stb1/stbredo02.log STANDBY 6 /u01/app/oracle/oradata/stb1/stbredo03.log STANDBY 7 /u01/app/oracle/oradata/stb1/stbredo04.log STANDBY 7 rows selected.

SQL> alter database recover managed standby database using current logfile disconnect;

Database altered.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ------------ -------- ----------------- --------- ------------------------- ---- 1 VALID MOUNTED-STANDBY LOCAL MANAGED REAL TIME APPLY

SQL> select name,value,datum_time,time_computed from v$dataguard_stats;

NAME VALUE DATUM_TIME TIME_COMPUTED ------------------------- ------------------------------ -------------------------- transport lag +00 01:29:00 03/06/2017 02:34:26 03/06/2017 02:34:33 apply lag +00 01:29:00 03/06/2017 02:34:26 03/06/2017 02:34:33 apply finish time +00 00:00:00.001 03/06/2017 02:34:33 estimated startup time 12 03/06/2017 02:34:33

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 12837 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> alter database recover managed standby database cancel;

Database altered.

SQL> alter database recover managed standby database using archived logfile disconnect;

Database altered.

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 13046 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ------------------- ----------------- ----------- -------------------- ------------ 1 VALID MOUNTED-STANDBY LOCAL MANAGED

SQL> set line 400

SQL> col for value a25

SQL> select name,value,datum_time,time_computed from v$dataguard_stats;

NAME VALUE DATUM_TIME TIME_COMPUTED ---------------------- ----------------- --------------------- -------------------- transport lag +00 01:35:01 03/06/2017 02:40:25 03/06/2017 02:40:27 apply lag +00 01:35:01 03/06/2017 02:40:25 03/06/2017 02:40:27 apply finish time +00 00:00:00.001 03/06/2017 02:40:27 estimated startup time 12 03/06/2017 02:40:27

SQL> alter database recover managed standby database cancel;

Database altered.

SQL> alter database recover managed standby database disconnect;

Database altered.

SQL> select dest_id,status,database_mode,type,recovery_mode,error from v$archive_dest_status where dest_id=1;

DEST_ID STATUS DATABASE_MODE TYPE RECOVERY_MODE ERROR ---------- --------- --------------- ----------- ----------------------- ----- 1 VALID MOUNTED-STANDBY LOCAL MANAGED REAL TIME APPLY

SQL> select name,value,datum_time,time_computed from v$dataguard_stats;

NAME VALUE DATUM_TIME TIME_COMPUTED --------------------- ----------------- ------------------- ----------------- transport lag +00 01:35:01 03/06/2017 02:40:25 03/06/2017 02:41:20 apply lag +00 01:35:01 03/06/2017 02:40:25 03/06/2017 02:41:20 apply finish time +00 00:00:00.001 03/06/2017 02:41:20 estimated startup time 12 03/06/2017 02:41:20

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 RFS IDLE 0 0 0 RFS IDLE 13260 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 WAIT_FOR_LOG 0 0 0 9 rows selected.

SQL> alter system switch logfile;

System altered.

SQL> /

System altered.

SQL> /

System altered.

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CLOSING 1 3 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CLOSING 1 5 0 RFS IDLE 0 0 0 RFS IDLE 576 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 APPLYING_LOG 576 112640 0 9 rows selected.

SQL> select name,value,datum_time,time_computed from v$dataguard_stats;

NAME VALUE DATUM_TIME TIME_COMPUTED -------------------------------- -------------------------------------------------- transport lag +00 00:00:00 03/06/2017 02:43:35 03/06/2017 02:43:36 apply lag +00 00:00:00 03/06/2017 02:43:35 03/06/2017 02:43:36 apply finish time +00 00:00:00.000 03/06/2017 02:43:36 estimated startup time 12 03/06/2017 02:43:36

SQL> select process,status,block#,blocks,delay_mins from v$managed_standby;

PROCESS STATUS BLOCK# BLOCKS DELAY_MINS --------- ------------ ---------- ---------- ---------- ARCH CLOSING 1 3 0 ARCH CONNECTED 0 0 0 ARCH CONNECTED 0 0 0 ARCH CLOSING 1 5 0 RFS IDLE 0 0 0 RFS IDLE 599 1 0 RFS IDLE 0 0 0 RFS IDLE 0 0 0 MRP0 APPLYING_LOG 599 112640 0

Hope you enjoyed and learn some thing from my this post. Please do comment on this post if you liked it.

For converting standalone to RAC database, both the environments should be running on the same operating system and using the same oracle release. Oracle supports the following methods to convert a single-instance database to an RAC database:

With my post, I will demonstrate you to convert non ASM single instance database to RAC database by using the rconfig command-line tool. During the conversion, rconfig performs the following steps automatically:

In Oracle 11g R2, a single-instance database can either be converted to an administrator-managed cluster database or a policy-managed cluster database.

When you navigate through the $ORACLE_HOME/assistants/rconfig/sampleXMLS, you will find two sample XML input files.

While converting a single-instance database, with file system storage, to an RAC database with Automatic Storage Management (ASM), rconfig invokes RMAN internally to back up the database to proceed with converting non-ASM to ASM. Therefore, to make backup faster it is better to increase the PARALLELISM in configuration settings, which will use more RMAN channels in the RMAN on the local node and will make backup run faster and eventually reduces the conversion duration. For example, you may configure the following in the RMAN settings of pawdb database on the local node.

RMAN> CONFIGURE DEVICE TYPE DISK PARALLELISM 3;

[oracle@paw-racnode1 ~ ]$ srvctl config database -d pawdb

Database unique name: pawdb

Database name: pawdb

Oracle home: /u01/app/oracle/product/11.2.0/db_1

Oracle user: oracle

Spfile:

Domain:

Start options: open

Stop options: immediate

Database role: PRIMARY

Management policy: AUTOMATIC

Server pools: pawdb

Database instances: pawdb

Disk Groups:

Services:

Database is administrator managed

[grid@paw-racnode1 ~]$ srvctl status database -d pawdb

Instance pawdb is running on node paw-racnode1

[oracle@paw-racnode1 ~]$ . oraenv

ORACLE_SID = [orcl] ? pawdb

The Oracle base for ORACLE_HOME=/u01/app/oracle/product/11.2.0/db_1 is /u01/app/oracle

[oracle@paw-racnode1 ~]$ sqlplus / as sysdba

SQL*Plus: Release 11.2.0.1.0 Production on Sat Dec 10 16:33:52 2016

Copyright (c) 1982, 2009, Oracle. All rights reserved.

Connected to:

Oracle Database 11g Enterprise Edition Release 11.2.0.1.0 – 64bit Production

With the Partitioning, Real Application Clusters, OLAP, Data Mining

and Real Application Testing options

SQL> select name from v$datafile;

NAME

————————————————————————–u01/app/oracle/oradata/pawdb/system01.dbf

/u01/app/oracle/oradata/pawdb/sysaux01.dbf

/u01/app/oracle/oradata/pawdb/undotbs01.dbf

/u01/app/oracle/oradata/pawdb/users01.dbf

/u01/app/oracle/oradata/pawdb/example01.dbf

[oracle@paw-racnode1 ~]$ cd $ORACLE_HOME/assistants/rconfig/sampleXMLs

[oracle@paw-racnode1 sampleXMLs]$ cp ConvertToRAC_AdminManaged.xml convert.xml

[oracle@paw-racnode1 sampleXMLs]$ cat convert.xml

<?xml version=”1.0″ encoding=”UTF-8″?>

<n:RConfig xmlns:n=”http://www.oracle.com/rconfig”

xmlns:xsi=”http://www.w3.org/2001/XMLSchema-instance”

xsi:schemaLocation=”http://www.oracle.com/rconfig”>

<n:ConvertToRAC>

<!– Verify does a precheck to ensure all pre-requisites are met, before the conversion is attempted. Allowable values are: YES|NO|ONLY –>

<n:Convert verify=”YES”>

<!–Specify current OracleHome of non-rac database for SourceDBHome –>

<n:SourceDBHome>/oracle/product/11.2.0/db_1</n:SourceDBHome>

<!–Specify OracleHome where the rac database should be configured. It can be same as SourceDBHome –>

<n:TargetDBHome>/oracle/product/11.2.0/db_1</n:TargetDBHome>

<!–Specify SID of non-rac database and credential. User with sysdba role is required to perform conversion –>

<n:SourceDBInfo SID=”orcl”>

<n:Credentials>

<n:User>sys</n:User>

<n:Password>oracle</n:Password>

<n:Role>sysdba</n:Role>

</n:Credentials>

</n:SourceDBInfo>

<!–Specify the list of nodes that should have rac instances running for the Admin Managed Cluster Database. LocalNode should be the first node in this nodelist. –>

<n:NodeList>

<n:Node name=”node1″/>

<n:Node name=”node2″/>

</n:NodeList>

<!–Instance Prefix tag is optional starting with 11.2. If left empty, it is derived from db_unique_name.–>

<n:InstancePrefix>sales</n:InstancePrefix>

<!– Listener details are no longer needed starting 11.2. Database is registered with default listener and SCAN listener running from Oracle Grid Infrastructure home. –>

<!–Specify the type of storage to be used by rac database. Allowable values are CFS|ASM. The non-rac database should have same storage type. ASM credentials are no needed for conversion. –>

<n:SharedStorage type=”ASM”>

<!–Specify Database Area Location to be configured for rac database.If this field is left empty, current storage will be used for rac database. For CFS, this field will have directory path. –>

<n:TargetDatabaseArea>+ASMDG</n:TargetDatabaseArea>

<!–Specify Flash Recovery Area to be configured for rac database. If this field is left empty, current recovery area of non-rac database will be configured for rac database. If current database is not using recovery Area, the resulting rac database will not have a recovery area. –>

<n:TargetFlashRecoveryArea>+ASMDG</n:TargetFlashRecoveryArea>

</n:SharedStorage>

[oracle@paw-racnode1 sampleXMLs]$ vi convert.xml

<?xml version=”1.0″ encoding=”UTF-8″?>

<n:RConfig xmlns:n=”http://www.oracle.com/rconfig”

xmlns:xsi=”http://www.w3.org/2001/XMLSchema-instance”

xsi:schemaLocation=”http://www.oracle.com/rconfig”>

<n:ConvertToRAC>

<!– Verify does a precheck to ensure all pre-requisites are met, before the conversion is attempted. Allowable values are: YES|NO|ONLY –>

<n:Convert verify=”YES“>

<!–Specify current OracleHome of non-rac database for SourceDBHome –>

<n:SourceDBHome>/u01/app/oracle/product/11.2.0/db_1</n:SourceDBHome>

<!–Specify OracleHome where the rac database should be configured. It can be same as SourceDBHome –>

<n:TargetDBHome>/u01/app/oracle/product/11.2.0/db_1</n:TargetDBHome>

<!–Specify SID of non-rac database and credential. User with sysdba role is required to perform conversion –>

<n:SourceDBInfo SID=”pawdb“>

<n:Credentials>

<n:User>sys</n:User>

<n:Password>sys</n:Password>

<n:Role>sysdba</n:Role>

</n:Credentials>

</n:SourceDBInfo>

<!–Specify the list of nodes that should have rac instances running for the Admin Managed Cluster Database. LocalNode should be the first node in this nodelist. –>

<n:NodeList>

<n:Node name=”paw-racnode1“/>

<n:Node name=”paw-racnode2“/>

</n:NodeList>

<!–Instance Prefix tag is optional starting with 11.2. If left empty, it is derived from db_unique_name.–>

<n:InstancePrefix>pawdb</n:InstancePrefix>

<!– Listener details are no longer needed starting 11.2. Database is registered with default listener and SCAN listener running from Oracle Grid Infrastructure home. –>

<!–Specify the type of storage to be used by rac database. Allowable values are CFS|ASM. The non-rac database should have same storage type. ASM credentials are no needed for conversion. –>

<n:SharedStorage type=”ASM“>

<!–Specify Database Area Location to be configured for rac database.If this field is left empty, current storage will be used for rac database. For CFS, this field will have directory path. –>

<n:TargetDatabaseArea>+PAWDB_DATA</n:TargetDatabaseArea>

<!–Specify Flash Recovery Area to be configured for rac database. If this field is left empty, current recovery area of non-rac database will be configured for rac database. If current database is not using recovery Area, the resulting rac database will not have a recovery area. –>

<n:TargetFlashRecoveryArea>+FRA</n:TargetFlashRecoveryArea>

</n:SharedStorage>

</n:Convert>

</n:ConvertToRAC>

</n:RConfig>

[oracle@paw-racnode1 sampleXMLs]$ rconfig convert.xml

Converting Database “pawdb” to Cluster Database. Target Oracle Home: /u01/app/oracle/product/11.2.0/db_1. Database Role: PRIMARY.

Setting Data Files and Control Files

Adding Database Instances

Adding Redo Logs

Enabling threads for all Database Instances

Setting TEMP tablespace

Adding UNDO tablespaces

Adding Trace files

Setting Flash Recovery Area

Updating Oratab

Creating Password file(s)

Configuring Listeners

Configuring related CRS resources

Starting Cluster Database

<?xml version=”1.0″ ?>

<RConfig version=”1.1″ >

<ConvertToRAC>

<Convert>

<Response>

<Result code=”0″ >

Operation Succeeded

</Result>

</Response>

<ReturnValue type=”object”>

<Oracle_Home>

/u01/app/oracle/product/11.2.0/db_1

</Oracle_Home>

<Database type=”ADMIN_MANAGED” >

<InstanceList>

<Instance SID=”pawdb1″ Node=”paw-racnode1″ >

</Instance>

<Instance SID=”pawdb2″ Node=”paw-racnode2″ >

</Instance>

</InstanceList>

</Database> </ReturnValue>

</Convert>

</ConvertToRAC></RConfig>

[oracle@paw-racnode1 sampleXMLs]$ ls -lrt $ORACLE_BASE/cfgtoollogs/rconfig/*.log

[oracle@paw-racnode1 sampleXMLs]$ tail -f $ORACLE_BASE/cfgtoollogs/rconfig/*.log

PAWDB =

(DESCRIPTION =

(ADDRESS = (PROTOCOL = TCP)(HOST = paw-racnode1.airydba.com)(PORT = 1521))

(CONNECT_DATA =

(SERVER = DEDICATED)

(SERVICE_NAME = pawdb)

)

)

PAWDB =

(DESCRIPTION =

(ADDRESS = (PROTOCOL = TCP)(HOST = paw-rac01-scan.airydba.com)(PORT = 1521))

(CONNECT_DATA =

(SERVER = DEDICATED)

(SERVICE_NAME = pawdb)

)

)

pawdb1: /u01/app/oracle/product/11.2.0/db_1

pawdb2: /u01/app/oracle/product/11.2.0/db_1

[oracle@paw-racnode1 sampleXMLs]$ srvctl status database -d pawdb

Instance pawdb1 is running on node pawracnode1

Instance pawdb2 is running on node paw-racnode2

[grid@paw-racnode1 sampleXMLs]$ crsctl stat res -t

-------------------------------------------------------------------------------- NAME TARGET STATE SERVER STATE_DETAILS -------------------------------------------------------------------------------- Local Resources -------------------------------------------------------------------------------- ora.DATA.dg ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.FRA.dg ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.LISTENER.lsnr ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.OCR_DG.dg ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.PAWDB_DATA.dg ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.asm ONLINE ONLINE paw-racnode1 Started ONLINE ONLINE paw-racnode2 Started ora.eons ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.gsd OFFLINE OFFLINE paw-racnode1 OFFLINE OFFLINE paw-racnode2 ora.net1.network ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.ons ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 ora.registry.acfs ONLINE ONLINE paw-racnode1 ONLINE ONLINE paw-racnode2 -------------------------------------------------------------------------------- Cluster Resources -------------------------------------------------------------------------------- ora.LISTENER_SCAN1.lsnr 1 ONLINE ONLINE paw-racnode2 ora.LISTENER_SCAN2.lsnr 1 ONLINE ONLINE paw-racnode1 ora.LISTENER_SCAN3.lsnr 1 ONLINE ONLINE paw-racnode1 ora.oc4j 1 OFFLINE OFFLINE ora.orcl.db 1 OFFLINE OFFLINE ora.paw-racnode1.vip 1 ONLINE ONLINE paw-racnode1 ora.paw-racnode2.vip 1 ONLINE ONLINE paw-racnode2 ora.pawdb.db 1 ONLINE ONLINE paw-racnode1 Open 2 ONLINE ONLINE paw-racnode2 Open ora.racdb.db 1 OFFLINE OFFLINE 2 OFFLINE OFFLINE ora.scan1.vip 1 ONLINE ONLINE paw-racnode2 ora.scan2.vip 1 ONLINE ONLINE paw-racnode1 ora.scan3.vip 1 ONLINE ONLINE paw-racnode1

[grid@paw-racnode2 ~]$ su oracle

Password:

[oracle@paw-racnode2 grid]$ . oraenv

ORACLE_SID = [+ASM2] ? pawdb2

The Oracle base for ORACLE_HOME=/u01/app/oracle/product/11.2.0/db_1 is /u01/app/oracle

[oracle@paw-racnode2 grid]$ sqlplus / as sysdba

SQL*Plus: Release 11.2.0.1.0 Production on Sun Aug 11 18:31:48 2015

Copyright (c) 1982, 2009, Oracle. All rights reserved.

Connected to:

Oracle Database 11g Enterprise Edition Release 11.2.0.1.0 – 64bit Production

With the Partitioning, Real Application Clusters, Automatic Storage Management, OLAP,

Data Mining and Real Application Testing options

SQL> select name from v$database;

NAME

———

PAWDB

SQL> select name from V$datafile;

NAME

————————————————————————–

+PAWDB_DATA/pawdb/datafile/system.256.930333443

+PAWDB_DATA/pawdb/datafile/sysaux.257.930333527

+PAWDB_DATA/pawdb/datafile/undotbs1.258.930333589

+PAWDB_DATA/pawdb/datafile/users.259.930333597

+PAWDB_DATA/pawdb/datafile/undotbs2.270.930333939

Okay, so we are able to recognize that single instance ( Non ASM ) database pawdb has been successfully converted into RAC database. Hope you enjoyed and learn some thing from my this post. Please do comment on this post if you liked it.

With reference to my previous blog post of GPnP Profile , there are two new additional components have been introduced in Oracle Clusterware 11g R2, one is GPnP profile and another one is Oracle local registry ( OLR ). GPnP Profile , we have already discussed. Through this post, I shall try to put some view on OLR.

OLR is an operating system file which resides on every node in the cluster and manages Oracle Clusterware configuration information for each particular node. It contains manageability information about Oracle Clusterware, including dependencies between various services. Oracle High Availability Services (OHASD) uses these information. This file contains the local registry information of node specific resources and is not shared by other nodes in the cluster. It is installed and configured at the time of OCR installation during Oracle clusterware installation. OLR is similar to OCR in terms of internal structure because it stores information in keys. Same tool can be used either check or dump the data of OLR, which we used to check and dump OCR.

OLR is located on local storage on each node in a cluster. Its default location is in the path $GRID_HOME/cdata/HOST_NAME.olr, where GRID_HOME is the Oracle Grid Infrastructure home, and HOST_NAME is the host name of the node. The location of OLR is stored in /etc/oracle/olr.loc and used by OHASD .

Prior to Oracle Clusterware 11gR2, the OCR and the voting disks ( Both are main CRS resources ) , were maintained in RAW or shared file system. As I have mentioned above , starting with Oracle cluster 11gR2, the Oracle clusterware related files ( OCR and Voting Disks ) can be stored in Oracle ASM, so for making cluster resources to be up, the ASM needs to be up and started but ASM itself is a resource managed by OHASD , so If ASM needs to be up, the clusterware components (OCR) should be up . This produces a contradictory situation because all the CRS and cluster resource information stored in OCR and OCR stored in ASM.

So to resolve this contradictory situation Oracle come up with two new node specific files in Oracle Clusterware 11g R2 i.e. one is GPnP profile and another one is Oracle local registry ( OLR ), both files separately maintain cluster specific components detail from other resources and services. As I have already told OLR is an locally available file on operating system, so there is no dependencies and this file could be read by any process with appropriate privileges.

The High Availability Services stack consists of daemons that communicate with their peers on the other nodes. As soon as the High Availability Services stack is up, the cluster node can join the cluster and use the shared components (e.g., the OCR). The startup sequence of the High Availability Services stack is stored partly in the Grid Plug and Play profile, but that sequence also depends on information stored in the OLR.

The OLR stores important security contexts information used by the Oracle High Availability Service, initially in the start sequence of Clusterware. The information stored in the OLR is needed by the Oracle High Availability Services daemon (OHASD) to start, includes data about GPnP wallets, Clusterware configuration, and version information. The information in the OLR and the Grid Plug and Play configuration file are needed to locate the voting disks. If they are stored in ASM, the ASM discovery string stored in the GPnP profile will be used by the cluster synchronization daemon to look them up.

There are some of the following information present in OLR:

Basically the information present in OLR deal with the OHASD process, whereas the information present in the OCR deal with the CRSD that means OHASD process mostly manages this OLR file. The OCR in turn is managed by the CRSD processes. This confirms that we need the OLR along with the GPnP profile, to start the Oracle High Availability Services stack.

If OLR is missing or corrupted then the clusterware resources will not start which in turn will not start the dependent components that means clusterware can’t be started on that node.

Hope, I have given above sufficient theory to understand what is Oracle Local Registry ( OLR ) and what its purpose of existence in Oracle Clusterware 11g R2. In my next post , I will explain the command line tools to access OLR.

[grid@paw-racnode1 ~]$ cd /u01/app/11.2.0/grid/gpnp/paw-racnode1/profiles/peer/

[grid@paw-racnode1 peer]$ ll

total 12

-rw-r–r– 1 grid oinstall 1905 Aug 7 13:57 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 13:55 profile_orig.xml

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.xml

[grid@paw-racnode1 peer]$ pwd

/u01/app/11.2.0/grid/gpnp/paw-racnode1/profiles/peer

[grid@paw-racnode1 peer]$ gpnptool get

Warning: some command line parameters were defaulted. Resulting command line:

/u01/app/11.2.0/grid/bin/gpnptool.bin get -o-

ClusterName=”paw-rac-cluster” PALocation=””> HIz8dOjUIFB32YPkmXW2HMVazoY=L6GOD0rB03Hp+NoKVcIHb9/Rp3xznBKpUJGfixN/27Qo6IL8/4HkjSnzsbHf1IuK1SQfqV5624tygB0x9HJfVcW+k6E6cQWwAgZOzpPR3ltctD7XeikkXtt5TOWQ6boMvCKJ5mOwzGzuj4S/qDu7lWPBHM9EPzHAEn/8NOlDcDo=

Success.

[grid@paw-racnode1 peer]$ mv profile.xml profile.paw

[grid@paw-racnode1 peer]$ ll

total 12

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.paw

-rw-r–r– 1 grid oinstall 1905 Aug 7 13:57 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 13:55 profile_orig.xml

[grid@paw-racnode1 peer]$ su –

Password:

[root@paw-racnode1 ~]# crsctl stop crs

CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.crsd’ on ‘paw-racnode1’

CRS-2790: Starting shutdown of Cluster Ready Services-managed resources on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.OCR_DG.dg’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.orcl.db’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.racdb.db’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.registry.acfs’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.LISTENER.lsnr’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.scan3.vip’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.LISTENER.lsnr’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.paw-racnode1.vip’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.scan3.vip’ on ‘paw-racnode1’ succeeded

CRS-2672: Attempting to start ‘ora.scan3.vip’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.paw-racnode1.vip’ on ‘paw-racnode1’ succeeded

CRS-2672: Attempting to start ‘ora.paw-racnode1.vip’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.scan2.vip’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.scan2.vip’ on ‘paw-racnode1’ succeeded

CRS-2672: Attempting to start ‘ora.scan2.vip’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.registry.acfs’ on ‘paw-racnode1’ succeeded

CRS-2676: Start of ‘ora.paw-racnode1.vip’ on ‘paw-racnode2’ succeeded

CRS-2676: Start of ‘ora.scan2.vip’ on ‘paw-racnode2’ succeeded

CRS-2676: Start of ‘ora.scan3.vip’ on ‘paw-racnode2’ succeeded

CRS-2672: Attempting to start ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode2’

CRS-2672: Attempting to start ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode2’

CRS-2676: Start of ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode2’ succeeded

CRS-2676: Start of ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.OCR_DG.dg’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.racdb.db’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.orcl.db’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.DATA.dg’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.FRA.dg’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.DATA.dg’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.FRA.dg’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.asm’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.asm’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.ons’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.eons’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.ons’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.net1.network’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.net1.network’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.eons’ on ‘paw-racnode1’ succeeded

CRS-2792: Shutdown of Cluster Ready Services-managed resources on ‘paw-racnode1’ has completed

CRS-2677: Stop of ‘ora.crsd’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.gpnpd’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.cssdmonitor’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.ctssd’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.evmd’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.asm’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.mdnsd’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.drivers.acfs’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.cssdmonitor’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.gpnpd’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.evmd’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.mdnsd’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.ctssd’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.drivers.acfs’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.asm’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.cssd’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.cssd’ on ‘paw-racnode1’ succeeded

CRS-2673: Attempting to stop ‘ora.diskmon’ on ‘paw-racnode1’

CRS-2673: Attempting to stop ‘ora.gipcd’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.gipcd’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.diskmon’ on ‘paw-racnode1’ succeeded

CRS-2793: Shutdown of Oracle High Availability Services-managed resources on ‘paw-racnode1’ has completed

CRS-4133: Oracle High Availability Services has been stopped.

[root@paw-racnode1 ~]# cd /u01/app/11.2.0/grid/gpnp/paw-racnode1/profiles/peer/

[root@paw-racnode1 peer]# ll

total 12

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.paw

-rw-r–r– 1 grid oinstall 1905 Aug 7 13:57 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 13:55 profile_orig.xml

[root@paw-racnode1 peer]# crsctl start crs

CRS-4123: Oracle High Availability Services has been started.

Step4: Check whether pending.xml created:

[root@paw-racnode1 peer]# ll

total 16

-rw-r–r– 1 grid oinstall 1905 Nov 28 16:23 pending.xml

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.paw

-rw-r–r– 1 grid oinstall 1905 Aug 7 13:57 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 13:55 profile_orig.xml

[root@paw-racnode1 peer]# cat pending.xml

HIz8dOjUIFB32YPkmXW2HMVazoY=L6GOD0rB03Hp+NoKVcIHb9/Rp3xznBKpUJGfixN/27Qo6IL8/4HkjSnzsbHf1IuK1SQfqV5624tygB0x9HJfVcW+k6E6cQWwAgZOzpPR3ltctD7XeikkXtt5TOWQ6boMvCKJ5mOwzGzuj4S/qDu7lWPBHM9EPzHAEn/8NOlDcDo=

[root@paw-racnode1 peer]# ll

total 16

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.paw

-rw-r–r– 1 grid oinstall 1905 Aug 7 13:57 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 13:55 profile_orig.xml

Conclusion 1: Whenever GPnP profile is not present, it is automatically copied from existing nodes by GPnPd as pending.xml. As you can see entries are same as was in profile.xml. This pending.xml automatically disappears , when all services and resources of cluster comes up.

[root@paw-racnode1 peer]# crsctl check cluster -all

**************************************************************

paw-racnode1:

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online

**************************************************************

paw-racnode2:

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online

**************************************************************

[grid@paw-racnode2 ~]$ su –

Password:

[root@paw-racnode2 ~]# cd /u01/app/11.2.0/grid/gpnp/paw-racnode2/profiles/peer

[root@paw-racnode2 peer]# ll

total 12

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:05 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 14:05 profile_orig.xml

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.xml

[root@paw-racnode2 peer]# crsctl stop crs

CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.crsd’ on ‘paw-racnode2’

CRS-2790: Starting shutdown of Cluster Ready Services-managed resources on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.OCR_DG.dg’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.VOTE_DG.dg’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.VOTE_EXT.dg’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.VOTE_HI.dg’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.racdb.db’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.registry.acfs’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.LISTENER.lsnr’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.scan3.vip’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.scan2.vip’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.scan3.vip’ on ‘paw-racnode2’ succeeded

CRS-2672: Attempting to start ‘ora.scan3.vip’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.scan2.vip’ on ‘paw-racnode2’ succeeded

CRS-2672: Attempting to start ‘ora.scan2.vip’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.LISTENER.lsnr’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.paw-racnode2.vip’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.paw-racnode2.vip’ on ‘paw-racnode2’ succeeded

CRS-2672: Attempting to start ‘ora.paw-racnode2.vip’ on ‘paw-racnode1’

CRS-2677: Stop of ‘ora.VOTE_EXT.dg’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.registry.acfs’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.VOTE_HI.dg’ on ‘paw-racnode2’ succeeded

CRS-2676: Start of ‘ora.paw-racnode2.vip’ on ‘paw-racnode1’ succeeded

CRS-2676: Start of ‘ora.scan2.vip’ on ‘paw-racnode1’ succeeded

CRS-2676: Start of ‘ora.scan3.vip’ on ‘paw-racnode1’ succeeded

CRS-2672: Attempting to start ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode1’

CRS-2672: Attempting to start ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode1’

CRS-2676: Start of ‘ora.LISTENER_SCAN3.lsnr’ on ‘paw-racnode1’ succeeded

CRS-2676: Start of ‘ora.LISTENER_SCAN2.lsnr’ on ‘paw-racnode1’ succeeded

CRS-2677: Stop of ‘ora.OCR_DG.dg’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.VOTE_DG.dg’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.racdb.db’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.DATA.dg’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.FRA.dg’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.FRA.dg’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.DATA.dg’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.asm’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.asm’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.eons’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.ons’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.ons’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.net1.network’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.net1.network’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.eons’ on ‘paw-racnode2’ succeeded

CRS-2792: Shutdown of Cluster Ready Services-managed resources on ‘paw-racnode2’ has completed

CRS-2677: Stop of ‘ora.crsd’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.cssdmonitor’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.ctssd’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.evmd’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.asm’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.drivers.acfs’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.mdnsd’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.cssdmonitor’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.mdnsd’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.ctssd’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.evmd’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.drivers.acfs’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.asm’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.cssd’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.cssd’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.gpnpd’ on ‘paw-racnode2’

CRS-2673: Attempting to stop ‘ora.diskmon’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.gpnpd’ on ‘paw-racnode2’ succeeded

CRS-2673: Attempting to stop ‘ora.gipcd’ on ‘paw-racnode2’

CRS-2677: Stop of ‘ora.gipcd’ on ‘paw-racnode2’ succeeded

CRS-2677: Stop of ‘ora.diskmon’ on ‘paw-racnode2’ succeeded

CRS-2793: Shutdown of Oracle High Availability Services-managed resources on ‘paw-racnode2’ has completed

CRS-4133: Oracle High Availability Services has been stopped.

[root@paw-racnode2 peer]# crsctl start crs

CRS-4123: Oracle High Availability Services has been started.

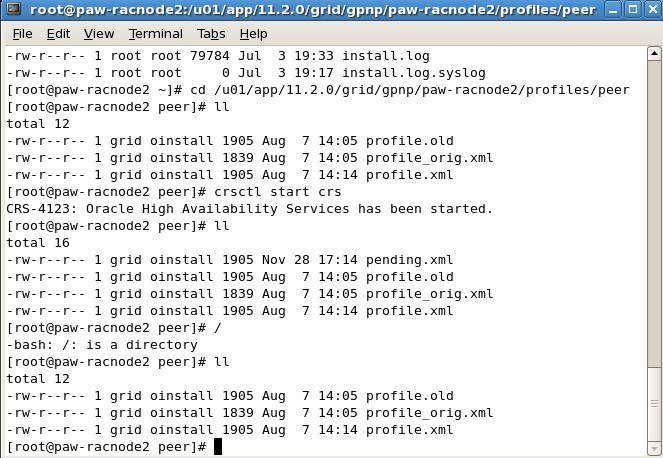

Step 4: Check whether pending.xml created:

[root@paw-racnode2 peer]# ll

total 16

-rw-r–r– 1 grid oinstall 1905 Nov 28 17:14 pending.xml

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:05 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 14:05 profile_orig.xml

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.xml

[root@paw-racnode2 peer]# ll

total 12

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:05 profile.old

-rw-r–r– 1 grid oinstall 1839 Aug 7 14:05 profile_orig.xml

-rw-r–r– 1 grid oinstall 1905 Aug 7 14:14 profile.xml

Attached Image to justify My Practical:

Final Conclusion: GPnPd always creates pending.xml, whether profile.xml exist or not. This pending.xml automatically disappears, when all services and resources of cluster comes up.

With reference to my OCR and Voting Disk Blog post, In Oracle 11g R2 RAC, we can store OCR and Voting disk in ASM, but clusterware needs OCR and Voting disk to start CRSD and CSSD process but point is, both OCR and Voting disk are stored in ASM, which itself is a resource for the nodes that means CRSD and CSSD process needs the OCR and Voting file before the ASM startup. So the question arise ” how the clusterware will start?”, we shall find the answer of this question in this same document, just wait..

To resolve this issue Oracle introduced two new node specific files OLR & GPnP, in Oracle 11g R2.

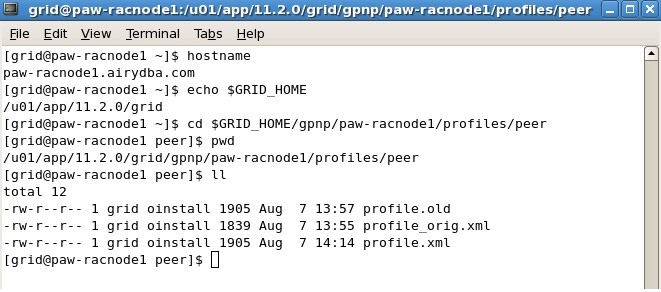

Now If we talk about GPnP profile, This GPnP profile is a new feature included in Oracle 11g R2.The GPnP profile is a small XML file located in

$GRID_HOME/gpnp//profiles/peer with name profile.xml.

Each node of the cluster maintains a local copy of this profile and is maintained by GPnP daemon along with mdns daemon . GPnP deamon ensures the synchronization of GPnP profile across all the nodes in the cluster and GPnP profile is used by clusterware to establish the correct global personality of a node. it cannot be stored on ASM as it is required prior to start of ASM. Hence, it is stored locally on each node and is kept synchronized across all the nodes by GPnPd.

When a node of an Oracle Clusterware cluster restarts, OHASD is started by platform-specific means, OHASD has access to the OLR (Oracle Local Registry) stored on the local file system. OLR provides needed data to complete OHASD initialization. OHASD brings up GPnP Daemon and CSS Daemon. CSS Daemon has access to the GPNP Profile stored on the local file system. The information regarding voting disk is on ASM , is read from GPnP profile i.e.

We can even read voting disk by using kfed utility ,even if ASM is not up.

In next step, the clusterware checks whether all the nodes have the updated GPnP profile and the nodes joins the cluster based on the GPnP configuration . Whenever a node is started or added to the cluster, the clusterware software on the starting node starts a GPnP agent and perform following task.

The Voting Files locations on ASM Disks are accessed by CSSD with well-known pointers in the ASM Disk headers and CSSD is able to complete initialization and start or join an existing cluster.

Now OHASD starts an ASM instance and ASM can now operate with initialized and operating CSSD.

With, an ASM instance running and its Diskgroup mounted, access to Clusterware’s OCR is available to CRSD (CRSD needs to read OCR to startup various resources on the node and hence update it, as status of resources changes )Now OHASD starts CRSD with access to the OCR in an ASM Diskgroup and thus Clusterware completes initialization and brings up other services under its control.

The ASM instance uses special code to locate the contents of the ASM SPFILE , which is stored in a Diskgroup.

Next. Since OCR is also on ASM, location of ASM spfile should be known. The order of searching the ASM SPfile is

ASM spfile is stored in ASM. But to start ASM, we’ll need spfile. Oracle know spfile location from GPnP profile & it reads spfile flag from underlying disk(s) and then starts the ASM.

Thus with the use of GPnP profile stores several information. GPnP profile information along with the information in the OLR have enough information , that have sufficient to automate several tasks or eased for the administrators and also the dependency on OCR is gradually reduced but not eliminated.

GPnP profile defines a node’s metadata about:

it contains digital signature information of the provisioning authority because the profile is security sensitive. It might identify the storage to be used as the root partition of a machine. This profile is protected by a wallet against modification. As in my case the WALLET information can be found in : /u01/app/11.2.0/grid/gpnp/paw-racnode1/wallets/peer “OR” /u01/app/11.2.0/grid/gpnp/wallets/peer .

If you have to manually modify the profile, it must first be unsigned with $GRID_HOME/bin/gpnptool, modified, and then signed again with the same utility, however there is a very slight chance you would ever be required to do so.

Now we can use the gpnptool with get option to dump this xml file into standard output. Below is the formatted output .

[grid@paw-racnode1 peer]$ pwd

/u01/app/11.2.0/grid/gpnp/paw-racnode1/profiles/peer

[grid@paw-racnode1 peer]$ gpnptool get

Warning: some command line parameters were defaulted. Resulting command line:

/u01/app/11.2.0/grid/bin/gpnptool.bin get -o-

<?xml version=”1.0″ encoding=”UTF-8″?><gpnp:GPnP-Profile Version=”1.0″ xmlns=”http://www.grid-pnp.org/2005/11/gpnp-profile” xmlns:gpnp=”http://www.grid-pnp.org/2005/11/gpnp-profile” xmlns:orcl=”http://www.oracle.com/gpnp/2005/11/gpnp-profile” xmlns:xsi=”http://www.w3.org/2001/XMLSchema-instance” xsi:schemaLocation=”http://www.grid-pnp.org/2005/11/gpnp-profile gpnp-profile.xsd” ProfileSequence=”5″ ClusterUId=”1c12005940a3efa8bf244ccd47060927″ ClusterName=”paw-rac-cluster“ PALocation=””><gpnp:Network-Profile><gpnp:HostNetwork id=”gen” HostName=”*”><gpnp:Network id=”net1″ IP=”192.168.75.0″ Adapter=”eth0″ Use=”public”/><gpnp:Network id=”net2″ IP=”10.0.0.0″ Adapter=”eth1″ Use=”cluster_interconnect”/></gpnp:HostNetwork></gpnp:Network-Profile><orcl:CSS-Profile id=”css” DiscoveryString=”+asm” LeaseDuration=”400″/><orcl:ASM-Profile id=”asm” DiscoveryString=”/dev/oracleasm/disks” SPFile=”+DATA/paw-rac-cluster/asmparameterfile/registry.253.919259819″/><ds:Signature xmlns:ds=”http://www.w3.org/2000/09/xmldsig#”><ds:SignedInfo><ds:CanonicalizationMethod Algorithm=”http://www.w3.org/2001/10/xml-exc-c14n#”/><ds:SignatureMethod Algorithm=”http://www.w3.org/2000/09/xmldsig#rsa-sha1″/><ds:Reference URI=””><ds:Transforms><ds:Transform Algorithm=”http://www.w3.org/2000/09/xmldsig#enveloped-signature”/><ds:Transform Algorithm=”http://www.w3.org/2001/10/xml-exc-c14n#”> <InclusiveNamespaces xmlns=”http://www.w3.org/2001/10/xml-exc-c14n#” PrefixList=”gpnp orcl xsi”/></ds:Transform></ds:Transforms><ds:DigestMethod Algorithm=”http://www.w3.org/2000/09/xmldsig#sha1″/><ds:DigestValue>HIz8dOjUIFB32YPkmXW2HMVazoY=</ds:DigestValue></ds:Reference></ds:SignedInfo><ds:SignatureValue>L6GOD0rB03Hp+NoKVcIHb9/Rp3xznBKpUJGfixN/27Qo6IL8/4HkjSnzsbHf1IuK1SQfqV5624tygB0x9HJfVcW+k6E6cQWwAgZOzpPR3ltctD7XeikkXtt5TOWQ6boMvCKJ5mOwzGzuj4S/qDu7lWPBHM9EPzHAEn/8NOlDcDo=</ds:SignatureValue></ds:Signature></gpnp:GPnP-Profile>

Success.

GPnP daemon replicates changes to the profile during

Profile is automatically updated Whenever changes are made to a cluster during installation and with configuration tools like

I hope the above information will help you to understand the Grid plug and play ( GPnP ) profile.

gpnptool Commands to access GPnP Profile:

[grid@paw-racnode1 peer]$ pwd

/u01/app/11.2.0/grid/gpnp/paw-racnode1/profiles/peer

[grid@paw-racnode1 peer]$ gpnptool get

Warning: some command line parameters were defaulted. Resulting command line:

/u01/app/11.2.0/grid/bin/gpnptool.bin get -o-

<?xml version=”1.0″ encoding=”UTF-8″?><gpnp:GPnP-Profile Version=”1.0″ xmlns=”http://www.grid-pnp.org/2005/11/gpnp-profile” xmlns:gpnp=”http://www.grid-pnp.org/2005/11/gpnp-profile” xmlns:orcl=”http://www.oracle.com/gpnp/2005/11/gpnp-profile” xmlns:xsi=”http://www.w3.org/2001/XMLSchema-instance” xsi:schemaLocation=”http://www.grid-pnp.org/2005/11/gpnp-profile gpnp-profile.xsd” ProfileSequence=”5″ ClusterUId=”1c12005940a3efa8bf244ccd47060927″ ClusterName=”paw-rac-cluster“ PALocation=””><gpnp:Network-Profile><gpnp:HostNetwork id=”gen” HostName=”*”><gpnp:Network id=”net1″ IP=”192.168.75.0″ Adapter=”eth0″ Use=”public”/><gpnp:Network id=”net2″ IP=”10.0.0.0″ Adapter=”eth1″ Use=”cluster_interconnect”/></gpnp:HostNetwork></gpnp:Network-Profile><orcl:CSS-Profile id=”css” DiscoveryString=”+asm” LeaseDuration=”400″/><orcl:ASM-Profile id=”asm” DiscoveryString=”/dev/oracleasm/disks” SPFile=”+DATA/paw-rac-cluster/asmparameterfile/registry.253.919259819″/><ds:Signature xmlns:ds=”http://www.w3.org/2000/09/xmldsig#”><ds:SignedInfo><ds:CanonicalizationMethod Algorithm=”http://www.w3.org/2001/10/xml-exc-c14n#”/><ds:SignatureMethod Algorithm=”http://www.w3.org/2000/09/xmldsig#rsa-sha1″/><ds:Reference URI=””><ds:Transforms><ds:Transform Algorithm=”http://www.w3.org/2000/09/xmldsig#enveloped-signature”/><ds:Transform Algorithm=”http://www.w3.org/2001/10/xml-exc-c14n#”> <InclusiveNamespaces xmlns=”http://www.w3.org/2001/10/xml-exc-c14n#” PrefixList=”gpnp orcl xsi”/></ds:Transform></ds:Transforms><ds:DigestMethod Algorithm=”http://www.w3.org/2000/09/xmldsig#sha1″/><ds:DigestValue>HIz8dOjUIFB32YPkmXW2HMVazoY=</ds:DigestValue></ds:Reference></ds:SignedInfo><ds:SignatureValue>L6GOD0rB03Hp+NoKVcIHb9/Rp3xznBKpUJGfixN/27Qo6IL8/4HkjSnzsbHf1IuK1SQfqV5624tygB0x9HJfVcW+k6E6cQWwAgZOzpPR3ltctD7XeikkXtt5TOWQ6boMvCKJ5mOwzGzuj4S/qDu7lWPBHM9EPzHAEn/8NOlDcDo=</ds:SignatureValue></ds:Signature></gpnp:GPnP-Profile>

Success.

[grid@paw-racnode1 peer]$ gpnptool getpval -asm_spf

Warning: some command line parameters were defaulted. Resulting command line:

/u01/app/11.2.0/grid/bin/gpnptool.bin getpval -asm_spf -p=profile.xml -o-

+DATA/paw-rac-cluster/asmparameterfile/registry.253.919259819

[grid@paw-racnode1 peer]$ gpnptool getpval -asm_dis

Warning: some command line parameters were defaulted. Resulting command line:

/u01/app/11.2.0/grid/bin/gpnptool.bin getpval -asm_dis -p=profile.xml -o-

/dev/oracleasm/disks

[grid@paw-racnode1 peer]$ gpnptool find

Found 2 instances of service ‘gpnp’.

mdns:service:gpnp._tcp.local.://paw-racnode2:64098/agent=gpnpd,cname=paw-rac-cluster,host=paw-racnode2,pid=6444/gpnpd h:paw-racnode2 c:paw-rac-cluster

mdns:service:gpnp._tcp.local.://paw-racnode1:55790/agent=gpnpd,cname=paw-rac-cluster,host=paw-racnode1,pid=6677/gpnpd h:paw-racnode1 c:paw-rac-cluster

I hope the above information will help you to understand the Grid plug and play ( GPnP ) profile.

Click on below given link to download the document: